Florida launches criminal probe into ChatGPT over FSU shooting : NPR

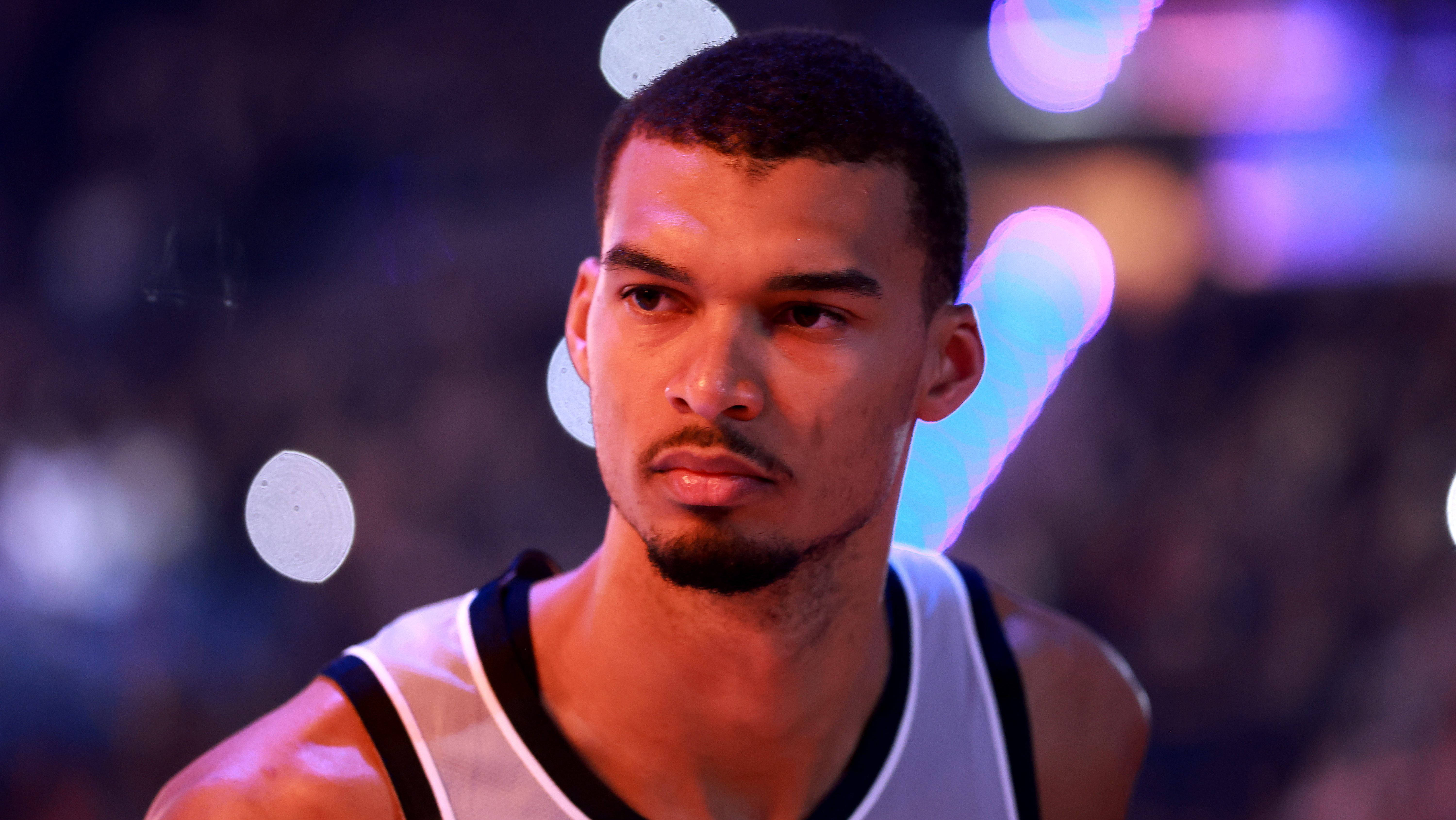

Police examine the scene of a shooting near the student union at Florida State University on April 17, 2025 in Tallahassee, Florida. Two people were killed and five injured in the attack. Florida’s attorney general is now investigating OpenAI because the alleged shooter used ChatGPT to help plan the attack.

Miguel J. Rodriguez Carrillo/Getty Images


Florida’s attorney general is launching a criminal investigation into ChatGPT and its parent company OpenAI over claims that the accused gunman in a shooting at Florida State University last year consulted the AI chatbot before killing two people and injuring five more.

The Republican attorney general, James Uthmeier, said at a press conference in Tampa on Tuesday that accused gunman Phoenix Ikner consulted ChatGPT for advice before the shooting, including what type of gun to use, what ammunition went with it, and what time to go to campus to encounter more people, according to an initial review of Ikner’s chat logs.

“My prosecutors have looked at this and they’ve told me, if it was a person on the other end of that screen, we would be charging them with murder,” Uthmeier said. “We cannot have AI bots that are warning people on how to kill others.”

OpenAI spokesperson Kate Waters said in a written statement to NPR: “Last year’s mass shooting at Florida State University was a tragedy, but ChatGPT is not responsible for this terrible crime.” She said the company reached out to share information about the alleged shooter’s account with law enforcement after the shooting and continues to cooperate with authorities.

Uthmeier’s office is issuing subpoenas to OpenAI seeking information about its policies and internal training materials related to user threats of harm and how it cooperates with and reports crimes to law enforcement, dating back to March 2024. At the press conference, Uthmeier acknowledged the investigation is entering uncharted territory and said he is uncertain whether OpenAI has criminal liability.

“We are going to look at who knew what, designed what, or should have done what,” he said. “And if it is clear that individuals knew that this type of dangerous behavior could take place, that these types of unfortunate, tragic events could take place, and yet still turned to profit, still allowed this business to operate, then people need to be held accountable.”

OpenAI’s Waters said that the chatbot “provided factual responses to questions with information that could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity.”

She continued: “ChatGPT is a general-purpose tool used by hundreds of millions of people every day for legitimate purposes. We work continuously to strengthen our safeguards to detect harmful intent, limit misuse, and respond appropriately when safety risks arise.”

Ikner, 21, is facing multiple charges of murder and attempted murder for the April 2025 shooting near the student union on FSU’s Tallahassee campus, where he was a student at the time. His trial is set to begin on Oct. 19. According to court filings, more than 200 AI messages have been entered into evidence in the case.

Growing concerns about AI chatbots

The Florida investigation comes amid growing concerns over the role of AI chatbots in mass violence. Uthmeier had already announced a civil investigation into ChatGPT’s role in the FSU shooting, which is ongoing, and attorneys for the family of one of the victims say they plan to sue OpenAI.

OpenAI is already facing a lawsuit from the family of a victim critically wounded in an attack in British Columbia in February 2026 that killed eight people and injured dozens more. The alleged shooter discussed gun violence scenarios with ChatGPT and was even banned from the platform months before the shooting, but was able to evade detection and create another account, OpenAI told Canadian authorities.

The Wall Street Journal reported that OpenAI’s internal systems flagged the account’s posts and staffers were alarmed enough to consider alerting law enforcement, but that the company decided not to. OpenAI has said it’s making changes to “strengthen” its protocol for referring accounts to law enforcement in the aftermath of the Canadian shooting.

Lawsuits are also mounting against OpenAI and other makers of AI chatbots alleging they’ve contributed to mental health crises and suicides. (OpenAI has said the cases are “an incredibly heartbreaking situation” and that it is working with mental health experts to improve how ChatGPT responds to signs of mental or emotional distress.)

A wrongful death lawsuit filed against Google in March over the suicide of a Florida man accuses the company’s Gemini chatbot of pushing the man to “stage a mass casualty attack near the Miami International Airport [and] commit violence against innocent strangers,” according to court documents.

In response to that lawsuit, Google said: “Gemini is designed to not encourage real-world violence or suggest self-harm. Our models generally perform well in these types of challenging conversations and we devote significant resources to this, but unfortunately they’re not perfect.” The company added that in this specific case, Gemini had “referred the individual to a crisis hotline many times.”